Last year, I spent a few weeks dabbling in machine learning, which remains an interesting area to explore though not directly related to my day-to-day work. Although the economics generally work in favor of doing ML in the cloud, there’s something to be said for having all of your code and data local and not having to worry about shutting down virtual hosts all the time. My 10+ year old PC just doesn’t cut it for ML tasks, and so I made a new one.

The main requirements for me are lots of cores (for kernel builds) and a hefty GPU or four (for ML training). For more than two GPUs, you’re looking at AMD Threadrippers; for exactly two you can go with normal AMD or Intel processors. The Threadrippers cost about $500 more once you factor in the motherboard. I decided that the chances of me using more than two GPUs (or even more than one) were pretty darn slim and not worth the premium.

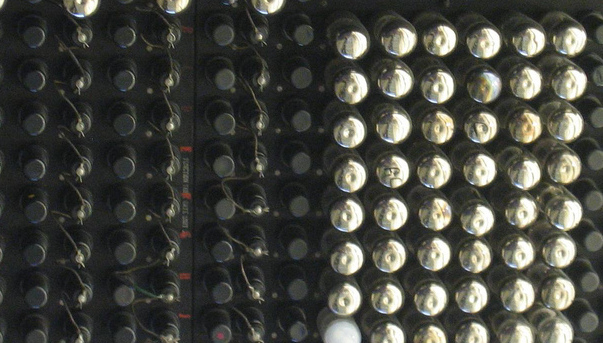

In the end I settled on a 12-core Ryzen 9 3900X with an RTX 2070 GPU, coming in at around $1800 USD all told. Unfortunately, in this arena everything is marketed to gamers, so I have all kinds of unasked-for bling, from militaristic motherboard logos to RGB LEDs in the cooler. Anyway, it works.

Here are a couple of made-up CPU benchmarks based on software I care about:

    filling a 7x7 word square (single core performance)
    ~~~~~~~~~~
    old:
    real    0m10.689s
    user    0m10.534s
    sys     0m0.105s

    new:
    real    0m2.274s
    user    0m2.243s
    sys     0m0.016s

    allmodconfig kernel build with -j $CORES_TIMES_TWO (multicore performance)
    ~~~~~~~~~~
    old:
    real    165m11.219s
    user    455m42.557s
    sys     135m37.557s

    new:
    real    9m31.778s
    user    193m31.477s
    sys     23m19.117s

This is all with stock clock settings and so on. I haven’t tried ML training yet, but the speedup there would be +inf considering it didn’t work at all on my old box.
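To put a number on the CPU results, here is a minimal sketch that turns the `real` (wall-clock) times from the benchmarks above into speedup factors. The helper names `parse_time` and `speedup` are made up for this example, and it assumes durations always come in the `NmS.SSSs` form that `time` printed here.

```python
import re


def parse_time(text):
    """Convert a `time`-style duration such as '165m11.219s' to seconds.

    Hypothetical helper: assumes the 'NmS.SSSs' format seen above.
    """
    match = re.fullmatch(r"(\d+)m(\d+(?:\.\d+)?)s", text)
    if match is None:
        raise ValueError(f"unrecognized duration: {text!r}")
    minutes, seconds = match.groups()
    return int(minutes) * 60 + float(seconds)


def speedup(old, new):
    """Return how many times faster the new wall-clock time is."""
    return parse_time(old) / parse_time(new)


# 'real' times copied from the two benchmarks above.
word_square = speedup("0m10.689s", "0m2.274s")
kernel_build = speedup("165m11.219s", "9m31.778s")

print(f"word square:  {word_square:.1f}x")
print(f"kernel build: {kernel_build:.1f}x")
```

That works out to roughly 4.7x on the single-core word square and roughly 17.3x on the kernel build.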