While looking at some kmalloc statistics for my little corner of wireless, I found a new tool for the toolbox: perf kmem. To test it out, I ran a simulated connected 25-node mesh in mac80211_hwsim.

Here’s what perf kmem stat --caller had to say:

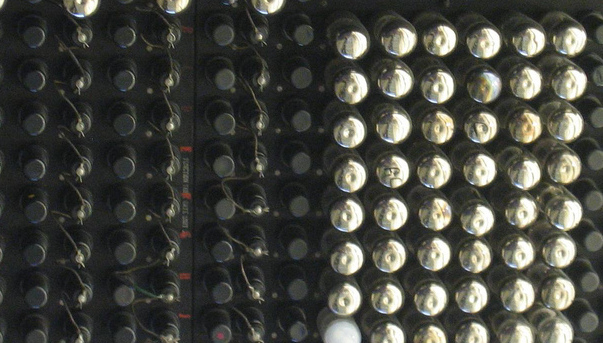

```
---------------------------------------------------------------------
 Callsite             | Total_alloc/Per | Total_req/Per | Hit | ...
---------------------------------------------------------------------
 mesh_table_alloc+3e  |         1600/32 |         400/8 |  50 |
 mesh_rmc_init+23     |     204800/8192 |   102600/4104 |  25 |
 mesh_path_new.const  |      315904/512 |    241864/392 | 617 |
 [...]
```

The number of mesh nodes shows up in the ‘Hit’ column in various ways: we can pretty easily see that mesh_table_alloc is called twice per mesh device (50 = 25 * 2), mesh_rmc_init is called once per device (25), and mesh_path_new is called at least once for every peer (617 is close to 25 * 24 = 600). We might scan the Hit column for callsites in our code that perform an unexpectedly high number of allocations, which would point to a bug or poor design.
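As a rough sanity check on those hit counts, the arithmetic can be written down as a couple of helpers. This is only a sketch: the function names are made up for illustration, and it assumes every node ends up peered with every other node, which is what the 25 * 24 estimate implies.

```c
#include <assert.h>

/* Hypothetical helper: expected hits for a callsite that runs a fixed
 * number of times on each simulated device. */
static unsigned int expected_hits_per_device(unsigned int nodes,
                                             unsigned int calls_per_device)
{
    assert(nodes > 0);
    return nodes * calls_per_device;
}

/* Hypothetical helper: lower bound on path allocations, assuming each
 * node creates one path per peer and has (nodes - 1) peers. */
static unsigned int expected_peer_paths(unsigned int nodes)
{
    assert(nodes > 0);
    return nodes * (nodes - 1);
}
```

With 25 nodes, these give 50 for a twice-per-device callsite, 25 for a once-per-device callsite, and 600 as the floor for per-peer paths. The observed 617 sits a little above that floor, which fits "at least once for every peer".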

The first two columns are also interesting because they show potentially wasteful allocations. Taking mesh_table_alloc as an example:

```
(gdb) l *mesh_table_alloc+0x3e
0x749fe is in mesh_table_alloc (net/mac80211/mesh_pathtbl.c:66).
61          newtbl = kmalloc(sizeof(struct mesh_table), GFP_ATOMIC);
62          if (!newtbl)
63                  return NULL;
64
65          newtbl->known_gates = kzalloc(sizeof(struct hlist_head), GFP_ATOMIC);
66          if (!newtbl->known_gates) {
67                  kfree(newtbl);
68                  return NULL;
69          }
70          INIT_HLIST_HEAD(newtbl->known_gates);
```

We are allocating the size of an hlist_head, which is one pointer. This is kind of unusual: typically the list pointer is embedded directly into the structure that holds it (here, struct mesh_table). The reason this was originally done is that the table pointer itself could be switched out using RCU, and a level of indirection for the gates list ensured that the same head pointer is seen by iterators with both the old and new table. With my rhashtable rework, this indirection is no longer necessary.
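To make the difference concrete, here is a small userspace sketch of the two layouts. The struct and function names are hypothetical stand-ins, not the actual mac80211 definitions, and plain malloc stands in for the kernel allocator.

```c
#include <assert.h>
#include <stdlib.h>

/* Userspace stand-ins for the kernel's hlist types. */
struct hlist_node { struct hlist_node *next, **pprev; };
struct hlist_head { struct hlist_node *first; };

/* "Before" (hypothetical): the head lives in its own tiny allocation. */
struct table_indirect { struct hlist_head *known_gates; };

/* "After" (hypothetical): the head is embedded in the table itself. */
struct table_embedded { struct hlist_head known_gates; };

/* With the head embedded there is one allocation and one failure path. */
static struct table_embedded *table_embedded_alloc(void)
{
    struct table_embedded *tbl = malloc(sizeof(*tbl));

    if (!tbl)
        return NULL;
    tbl->known_gates.first = NULL;  /* same effect as INIT_HLIST_HEAD */
    return tbl;
}

/* Returns nonzero when no gates have been added yet. */
static int table_embedded_no_gates(const struct table_embedded *tbl)
{
    assert(tbl != NULL);
    return tbl->known_gates.first == NULL;
}
```

Both layouts cost one pointer inside the table, so embedding the head does not grow the table; it only removes the second allocation and its cleanup branch.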

Tracking allocations at 8-byte granularity would impose an unacceptable level of overhead, so each of these 8-byte requests gets rounded up to 32 bytes (the "Per" half of the first column). Because we allocated 50 of them (25 devices, two tables each), in all we asked for 400 bytes and actually got 1600, a waste of 1200 bytes. Forty-eight wasted bytes per device is generally nothing worth worrying about, considering we usually only have one or two devices, but as this allocation is no longer needed, we can just drop it and also shed a bit of error-handling code.
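The waste figures follow directly from the two totals in the table. A minimal sketch of that calculation, with hypothetical helper names:

```c
#include <assert.h>
#include <stddef.h>

/* Bytes handed out by the allocator beyond what the callers asked for. */
static size_t wasted_bytes(size_t total_alloc, size_t total_req)
{
    assert(total_alloc >= total_req);
    return total_alloc - total_req;
}

/* The same waste, averaged over the number of devices. */
static size_t wasted_per_device(size_t total_alloc, size_t total_req,
                                size_t devices)
{
    assert(devices > 0);
    return wasted_bytes(total_alloc, total_req) / devices;
}
```

For the mesh_table_alloc row, wasted_bytes(1600, 400) is 1200 and wasted_per_device(1600, 400, 25) is 48. Applying the same helpers to the mesh_rmc_init row gives 102200 bytes in total, or 4088 bytes per device, which is why that callsite is the more interesting one.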

In the case of mesh_rmc_init, we see an opportunity for tuning. The requested allocation is the unfortunate size of 4104 bytes, just a bit larger than the page size, 4096, so each request is rounded up to 8192 bytes. Allocations of a single page (so-called order-0 allocations) are much easier to fulfill than the order-1 allocations that actually happen here. There are a few ways to get this to fit into 4k, and thus free up a page per device: we could remove or move the u32 in this struct that pushes it over the page size, reduce the number of buckets in the hashtable, or switch the internal lists to hlists. The last of these is the simplest approach, and the one I chose.
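The effect of switching list types can be sketched in userspace. Everything below is illustrative: the struct names are hypothetical, the page size is taken as 4096, and the bucket count of 256 is an assumption chosen because it reproduces the 4104-byte figure on a typical 64-bit build.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define SKETCH_PAGE_SIZE 4096u
#define SKETCH_BUCKETS   256    /* assumption, not taken from the source */

/* Smallest order such that (page size << order) covers the request. */
static unsigned int sketch_order_for_size(size_t size)
{
    unsigned int order = 0;
    size_t span = SKETCH_PAGE_SIZE;

    assert(size > 0);
    while (span < size) {
        span <<= 1;
        order++;
    }
    return order;
}

/* Doubly linked list head: two pointers per bucket. */
struct sketch_list_head { struct sketch_list_head *next, *prev; };

/* hlist head: a single pointer per bucket. */
struct sketch_hlist_head { void *first; };

/* Hypothetical cache layout using list heads, plus the trailing u32. */
struct sketch_rmc_list {
    struct sketch_list_head bucket[SKETCH_BUCKETS];
    uint32_t idx_mask;
};

/* The same layout with hlist heads: the bucket array is half the size. */
struct sketch_rmc_hlist {
    struct sketch_hlist_head bucket[SKETCH_BUCKETS];
    uint32_t idx_mask;
};
```

Here sketch_order_for_size(4104) returns 1 while sketch_order_for_size(4096) returns 0, which is the whole problem in one line. With 8-byte pointers the list-based struct should come out at 4104 bytes and the hlist-based one at roughly half that, comfortably inside a single page.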

I have patches for these and a few other minor things in my local tree and will send them out soon.