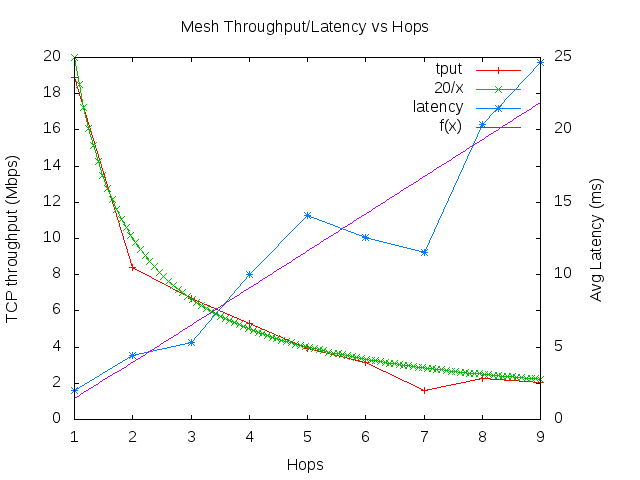

With the per-link signal levels that I added over the weekend, my wmediumd fork leveled up from “mere curiosity” to “potentially useful someday.” For mesh, this means you can use signal levels to influence which mesh paths HWMP creates.

For example, I was able to validate this fix by setting up a virtual 4-node mesh with one bad path and one good path. With the patch reverted, the bad path was almost always selected because its PREQs reached the target first. [In a real deployment, this test would exhibit frequent path swapping because the order in which PREQs are received is essentially random, a finding of “Experimental evaluation of two open source solutions for wireless mesh routing at layer two” by Garroppo et al. Wmediumd doesn’t show this yet because frames are mostly received and queued in order. At the time of the patch, I validated it on an actual 15-node mesh.]
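To make the failure mode concrete, here is a toy Python sketch. It is not the kernel code and not the patch itself; the `Preq` type and both selection functions are hypothetical names made up for illustration. It only contrasts "keep whichever PREQ arrived first" with "also accept a later PREQ that carries the same sequence number but a better metric." Real HWMP sequence number comparison also has to handle wraparound, which is ignored here.

```python
from dataclasses import dataclass


@dataclass
class Preq:
    """A path request as seen by the target (hypothetical, simplified)."""
    sn: int      # originator sequence number
    metric: int  # accumulated path metric, lower is better
    via: str     # label for the path that delivered this PREQ


def select_first_arrival(preqs):
    """Keep the first PREQ seen for a sequence number; ignore later duplicates."""
    best = None
    for p in preqs:
        if best is None or p.sn > best.sn:
            best = p
    return best


def select_best_metric(preqs):
    """Also accept a later PREQ with the same sequence number if its metric is lower."""
    best = None
    for p in preqs:
        newer = best is not None and p.sn > best.sn
        better = best is not None and p.sn == best.sn and p.metric < best.metric
        if best is None or newer or better:
            best = p
    return best


# The bad path's PREQ happens to arrive first, as in the virtual 4-node test.
arrivals = [
    Preq(sn=1, metric=900, via="bad"),
    Preq(sn=1, metric=300, via="good"),
]

print(select_first_arrival(arrivals).via)  # bad
print(select_best_metric(arrivals).via)    # good
```

With in-order delivery, as in wmediumd today, the arrival order (and so the first function's answer) is the same on every run, which is why the virtual test picked the bad path so consistently.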

There are still a couple of things that would be nice to have here. Today, we decide whether a multicast frame is received based on the signal level from the transmitter to the receiver and the multicast rate. However, this means that with a low multicast rate, there is basically zero frame loss. In real life, loss happens much more frequently, so we cannot yet use wmediumd to test the effects of lost path request frames, which are the subject of at least one pending HWMP patch. Another problem is that the current approach only handles static topologies; we might be interested in what happens with mobile nodes, for example. For that we’d need to be able to change the signal level periodically; how to easily specify that is a bit of a question mark.
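As a rough illustration of why a low multicast rate hides loss, here is a small Python sketch. Everything in it is an assumption for the sake of the example: the logistic loss curve, the noise floor, and the per-rate SNR midpoints are invented numbers, not wmediumd's actual model or tables.

```python
import math

# Hypothetical SNR (dB) at which half of the frames are lost, per rate in Mbps.
SNR_50_DB = {6: 4.0, 12: 7.0, 24: 13.0, 54: 23.0}

NOISE_FLOOR_DBM = -91.0  # assumed noise floor
STEEPNESS = 1.0          # assumed slope of the loss curve, per dB


def loss_probability(signal_dbm, rate_mbps):
    """Probability that a frame at this rate is lost on a link with this signal level."""
    snr_db = signal_dbm - NOISE_FLOOR_DBM
    return 1.0 / (1.0 + math.exp(STEEPNESS * (snr_db - SNR_50_DB[rate_mbps])))


# Same link, same signal level, different rates.
for rate in sorted(SNR_50_DB):
    print(f"{rate:>2} Mbps: loss probability {loss_probability(-70.0, rate):.6f}")
```

Under these assumptions, a link that is quite lossy at 54 Mbps loses essentially nothing at 6 Mbps, so PREQs sent at a low multicast rate nearly always get through unless the link is very weak.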