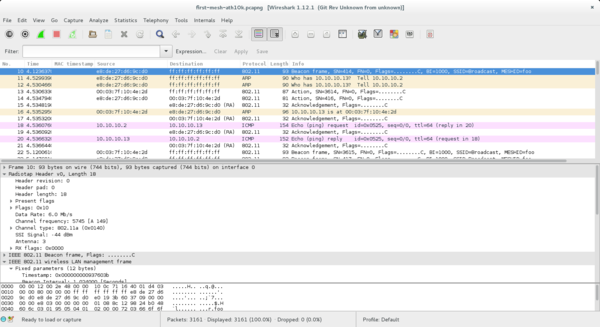

For a while now, I’ve wanted an easy way to decrypt a mesh packet capture when I know the SAE passphrase. This would be quite handy for debugging.

I reasoned that, if I knew the shared secret, and had captured the over-the-air exchange, it should be possible to make such a tool, right? We know everything each station knows in that case, don’t we?

So I spent a bit of time last week reimplementing SAE in Python to come to grips with its various steps. And I found my reasoning was flawed.

Similarly to Diffie-Hellman, SAE exchanges values that combine a random value (we’ll call them r1 and r2) with some public part P, in such a way that it is hard to extract r1 even if you know P (e.g., r1*P and r2*P with ECC, although this is not exactly how SAE is specified). Each peer can combine the value it receives with its own random number to arrive at the same shared secret (e.g., something like r1 * r2 * P). The crucial point is that r1 and r2 cannot be determined from the exchange alone. They exist only in memory on each peer during the exchange. So a passive capture, even together with the passphrase, is not enough to recompute the keys.
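To make that concrete, here is a minimal sketch of a Diffie-Hellman-style exchange. It is not SAE: it uses modular exponentiation over a toy prime instead of ECC (so P^r1 plays the role of r1*P), and the modulus, the base, and the function names are all assumptions made up for illustration.

```python
import secrets

# Toy parameters, assumed for illustration only. Real SAE uses ECC or
# much larger finite-field groups.
PRIME = 2**127 - 1
P = 5  # stands in for the "public part P" in the text


def make_keypair():
    """Pick a private random value r and return (r, P^r mod PRIME)."""
    r = secrets.randbelow(PRIME - 3) + 2  # 2 <= r <= PRIME - 2
    return r, pow(P, r, PRIME)


def shared_secret(own_private, peer_public):
    """Combine our own private value with the peer's over-the-air value."""
    return pow(peer_public, own_private, PRIME)


r1, pub1 = make_keypair()  # station 1; r1 never leaves memory
r2, pub2 = make_keypair()  # station 2; r2 never leaves memory

# Each peer reaches the same secret using only its own private value.
k1 = shared_secret(r1, pub2)
k2 = shared_secret(r2, pub1)
assert k1 == k2
```

A sniffer sees only `pub1` and `pub2`. Since `shared_secret` needs one of the private values, knowing P does not help, short of solving a discrete logarithm.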

So if the secrecy doesn’t actually depend on the password, what is it there for? The answer is authentication: SAE is a password-authenticated key exchange (PAKE). The password ensures that the party we are talking to is who we think it is, or at least someone who also knows the password. In the case of SAE, P is derived from the password.
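A rough sketch of the authentication idea: if P is derived from the password, two peers only arrive at the same key when they started from the same password. This is a toy that looks more like a SPEKE-style exchange than SAE's real commit/confirm messages, and it does not use SAE's actual password-to-element derivation. The modulus, the hashing step, and every name below are assumptions for illustration.

```python
import hashlib
import secrets

PRIME = 2**127 - 1  # toy modulus, assumed for illustration only


def element_from_password(password):
    """Map a password to a group element (hypothetical, not SAE's method)."""
    digest = hashlib.sha256(password.encode("utf-8")).digest()
    base = int.from_bytes(digest, "big") % PRIME
    return pow(base, 2, PRIME)


def commit(password):
    """Return (private r, value sent over the air) for this password."""
    p = element_from_password(password)
    r = secrets.randbelow(PRIME - 3) + 2
    return r, pow(p, r, PRIME)


def derive_key(own_private, peer_public):
    """Combine our private value with what the peer sent."""
    return pow(peer_public, own_private, PRIME)


ra, pub_a = commit("mesh passphrase")  # honest peer A
rb, pub_b = commit("mesh passphrase")  # honest peer B
rc, pub_c = commit("wrong guess")      # peer without the password

# Same password on both sides: the keys agree.
same_password_match = derive_key(ra, pub_b) == derive_key(rb, pub_a)

# Different passwords: the keys should differ (barring a negligible
# coincidence), so the handshake would fail to confirm.
wrong_password_match = derive_key(ra, pub_c) == derive_key(rc, pub_a)
```

Here `same_password_match` comes out true, while `wrong_password_match` should be false. In both cases the key still depends on the random values, which is why the password alone does not decrypt a capture.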

As for the original goal, what we can do instead is use the CCMP keys from a wpa_supplicant log and decrypt frames with those. That is not nearly as convenient, though.

And thus my postings for 2015 are at an end; happy new year, all!